The Model Isn't the Product. The Memory Is.

- Rich Washburn

- 21 hours ago


The developer community just had a collective realization in April 2026. It's been spreading through forums, Substacks, and builder channels for the past few weeks. And if you've been following the conversation around autonomous AI agents, you've probably seen some version of this argument surface:

The model doesn't matter as much as everyone thought. The loop does.

I've been saying a version of this for three years. Not as a theory. As a lived operational reality.

What the Developer Community Just Figured Out

Here's the core insight that's landing across the agentic AI space right now: when you build a serious agent runtime — one capable of multi-step orchestrated workflows, durable task loops, memory with provenance, and real channel delivery — you realize the model is just a reasoning engine inside a much larger operating loop.

Swap the model. The loop continues. This sounds obvious in retrospect. It's not obvious until you've built something that outlives a single conversation, a single session, a single provider policy change. Then it becomes viscerally clear: the model was never the product. The memory was. The structure was. The continuity was.
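A minimal sketch of "swap the model, the loop continues," under stated assumptions: the names `run_loop`, `engine_a`, and `engine_b` are hypothetical, and the two engines are stand-in functions rather than real provider calls. It is not how ARIA or any particular runtime is built; it only shows the shape of the idea, where memory sits outside whichever engine is plugged in.

```python
from typing import Callable, List

# Assumption: a "reasoning engine" is any function from prompt text to reply text.
Engine = Callable[[str], str]


def run_loop(engine: Engine, memory: List[str], tasks: List[str]) -> List[str]:
    """Run each task through the engine, feeding accumulated memory back in."""
    for task in tasks:
        context = "\n".join(memory)
        reply = engine(f"{context}\nTASK: {task}")
        # The loop, not the engine, owns the record of what happened.
        memory.append(f"{task} -> {reply}")
    return memory


# Two hypothetical stand-in engines; real ones would call a model provider.
def engine_a(prompt: str) -> str:
    return f"A saw {prompt.count('->')} prior steps"


def engine_b(prompt: str) -> str:
    return f"B saw {prompt.count('->')} prior steps"


memory: List[str] = []
run_loop(engine_a, memory, ["draft brief"])
run_loop(engine_b, memory, ["send brief"])  # different engine, same memory
```

The second engine picks up the step recorded under the first one, because the history lives in `memory` and not inside either engine.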

What the builders are discovering now is that if your memory lives inside one model's context window, you're locked. If it lives inside one platform's subscription, you're one pricing change away from a broken workflow. If it lives in a chat transcript, you have retrieval sludge, not operational memory. The architectural unlock is moving memory outside the model entirely — into a structured, user-owned layer that any reasoning engine can read, write to, and build on. That's what makes a workflow durable. That's what makes an agent feel less like a demo and more like infrastructure.
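One way to picture a user-owned memory layer is a plain file that any session or engine can open. The sketch below assumes a JSON file on local disk and a hypothetical `MemoryStore` class; the entry fields (`kind`, `text`) are illustrative only, and a real system would likely need locking, schemas, and backups.

```python
import json
import tempfile
from pathlib import Path


class MemoryStore:
    """Append-only memory kept in a plain JSON file the user controls."""

    def __init__(self, path: Path) -> None:
        self.path = path
        if not self.path.exists():
            self.path.write_text("[]")

    def read(self) -> list:
        """Return every stored entry, oldest first."""
        return json.loads(self.path.read_text())

    def write(self, entry: dict) -> None:
        """Append one entry and persist the whole list back to disk."""
        entries = self.read()
        entries.append(entry)
        self.path.write_text(json.dumps(entries, indent=2))


# Assumption: a temp directory stands in for wherever the user keeps the file.
store_path = Path(tempfile.mkdtemp()) / "memory.json"
MemoryStore(store_path).write({"kind": "decision", "text": "ship weekly"})

# A second store object stands in for a later session or a different engine.
later = MemoryStore(store_path).read()
```

Nothing in the file format depends on a model, a context window, or a subscription, which is the point of moving memory out of the chat transcript.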

I Wasn't Waiting for April

Three years ago, I started building something I call ARIA — not as a product, not as a startup, but as an experiment in cognitive architecture. The question I was trying to answer wasn't "can AI help me work faster?" It was something more specific: what happens when the structure itself produces the behavior? I wrote about the fruit fly experiment — the one where researchers recreated the neural wiring of a fruit fly inside a simulation and didn't program it to do anything. The simulated fly just... behaved like a fly. Structure produced behavior. That's the thesis I've been testing with AI systems for three years.

When you build the right architecture on top of a reasoning engine — the right memory model, the right continuity layer, the right operational structure — something different starts to happen. The system doesn't just respond. It continues. It doesn't just retrieve. It reasons across accumulated context. It doesn't just answer. It models you. That last part was the one that surprised me most.

After enough accumulated context — enough decision cycles, enough strategic pivots, enough late-night work sessions captured and processed — the system began reflecting my own cognitive patterns back at me with a clarity I hadn't expected. Not flattery. Not personalization tricks. Something more like an executive mirror. It told me things I needed to hear about how I operate. That's when I started using the phrase "fiduciary intelligence" — because a system that's structurally aligned with your interests will eventually tell you what you need to hear, not just what you asked for. That's qualitatively different from a chatbot.

The Business Case Is in the Receipts

I published the numbers a few weeks ago. Fourteen days of running a fully operational cognitive support layer alongside my content and communication work. Not replacing my judgment — running alongside it.

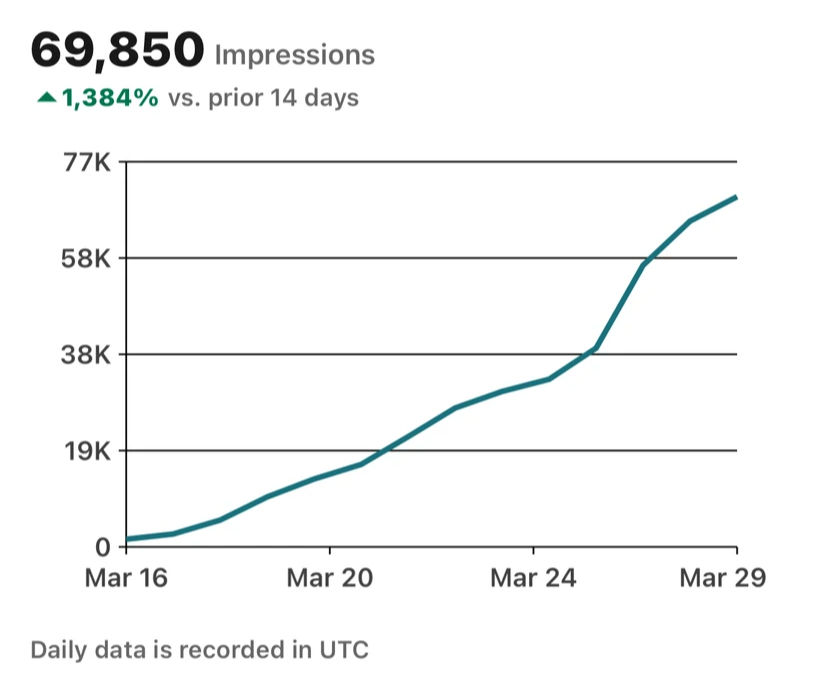

The results weren't incremental. 69,850 impressions over 14 days. 1,384% impression growth over the prior period. 1,062 engagements. And more importantly: the operational overhead of running my content, communications, and strategic output dropped dramatically.

The posts that performed best weren't the most polished. They were the ones with the sharpest signal — which is what happens when you have a system that understands your voice, your thesis, your patterns, and your audience, instead of generating generic AI content on command. But the numbers aren't the real story. The real story is the experience described in "Business at the Speed of Thought." The meeting that happened in a car, unscheduled, while moving. One tap. By the time I pulled into my next stop, the meeting was transcribed, processed into a tactical brief, distributed to the team, and a fully branded deck was sitting in everyone's inbox. I didn't type a word. That's not a productivity hack. That's a different relationship with time.

What the Developer Community Is Catching Up To

The insight rippling through the builder community right now is exactly this: serious agentic work requires memory that outlives the session. It requires workflows that outlive the model. It requires structure that produces behavior regardless of which reasoning engine you plug in.

They're calling it "durable workflows" and "brain-swappable runtimes." They're debating memory provenance — was this observed from a real source? Was it confirmed by a user? Is it stale? Should it scope to a particular task?
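Those four provenance questions map fairly directly onto fields in a record. The sketch below is one hedged reading of them: the `MemoryRecord` fields, the `is_usable` rule, and the 90-day staleness cutoff are all assumptions chosen for illustration, not a standard the community has settled on.

```python
from dataclasses import dataclass
from datetime import datetime, timedelta, timezone
from typing import Optional


@dataclass
class MemoryRecord:
    text: str
    source: str                       # assumed values: "observed" or "inferred"
    confirmed_by_user: bool
    recorded_at: datetime
    task_scope: Optional[str] = None  # None means the record applies to any task


def is_usable(rec: MemoryRecord, task: str, now: datetime,
              max_age: timedelta = timedelta(days=90)) -> bool:
    """Apply the staleness, scope, and source checks in that order."""
    if now - rec.recorded_at > max_age:
        return False  # stale
    if rec.task_scope is not None and rec.task_scope != task:
        return False  # scoped to a different task
    # Trust it if it came from a real source or the user confirmed it.
    return rec.source == "observed" or rec.confirmed_by_user


now = datetime(2026, 4, 30, tzinfo=timezone.utc)
records = [
    MemoryRecord("prefers short briefs", "observed", False, now - timedelta(days=10)),
    MemoryRecord("uses old CRM", "observed", True, now - timedelta(days=200)),
    MemoryRecord("likes charts", "inferred", False, now - timedelta(days=1)),
    MemoryRecord("deck due Friday", "inferred", True, now - timedelta(days=1), "deck"),
]
usable_for_deck = [r.text for r in records if is_usable(r, "deck", now)]
usable_for_email = [r.text for r in records if is_usable(r, "email", now)]
```

Here the 200-day-old record is dropped as stale, the unconfirmed inference is dropped as untrusted, and the deck deadline only surfaces when the task is the deck.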

These sound like technical questions. They're not. They're operational questions. And they're the same questions I was working through three years ago when I was trying to figure out why the early AI tools felt like vending machines — insert prompt, get output, context gone — and what it would take to build something that felt more like a trusted collaborator.

The answer was always the memory layer. The answer was always the architecture.

The Strategic Layer Nobody's Naming Correctly

Here's the part that I think gets underestimated in most of the current conversation: the memory layer isn't just a technical component. It's a competitive moat.

When a system has accumulated six months of your decision patterns, your communication style, your strategic priorities, your stakeholder relationships, your failed experiments, and your operational rhythm — that is an asset. It doesn't live in the model. It lives in the structure you've built around the model.

That structure is what the developer community is now calling a "durable workflow." I've been calling it a cognitive operating system. The name doesn't matter. The principle does. The people who build that structure, who invest in the memory layer, the continuity layer, the provenance framework, and the operational architecture, will have a system that compounds over time. Every session adds context. Every decision adds signal. Every output refines the system's understanding of how you think and what you're building.

The people who don't build that structure will keep living in single-session AI — slightly better search, slightly faster drafting, but fundamentally no different from the vending machine they started with.

Where This Goes

The April 2026 developer conversation is pointing toward something the enterprise world hasn't fully priced yet: the most valuable AI configuration isn't the most powerful model. It's the most deeply integrated loop. Not the biggest context window. The deepest operational memory. Not the fastest generation speed. The most durable workflow structure. Not the most impressive demo. The most reliable continuity.

The labs will keep fighting over which model is best. OpenAI will keep expanding access. Anthropic will keep managing compute constraints. New models will emerge, get cheaper, get more capable, get commoditized. The model war is real and it will continue. But the architecture war is quieter and it's the one that compounds.

I've watched this pattern play out across every serious deployment of this kind of system. The first thing people notice isn't the AI capability. It's the relief. The feeling that things aren't slipping. That the context is held. That the work continues even when attention fragments. That relief isn't a feature of the model. It's a feature of the structure, and structure, as it turns out, is behavior.

Rich Washburn is a technology strategist and AI infrastructure advisor working at the intersection of cognitive architecture, AI deployment, and operational leverage.
